Modeling approaches for cross-sectional integrative data analysis: Evaluations and recommendations

Abstract

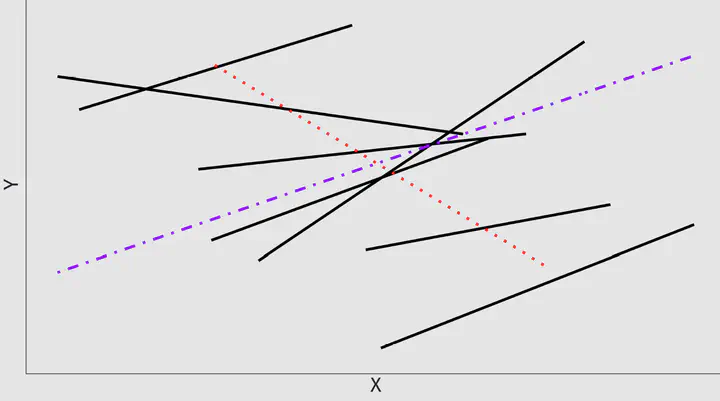

With the growing availability of open-access data from multiple psychological studies, appropriate statistical methods for synthesizing these data sets are needed. One approach, integrative data analysis (IDA), can jointly model participant-level and study-level data from multiple studies. In psychological science, IDA is typically conducted with fixed-effects or multilevel models (MLMs). However, evaluations of the performance of these models in an IDA context are limited. We evaluated three fixed-effects regression models (aggregated, disaggregated, and study-specific coefficients regressions) and two MLMs (fixed-slope and random-slopes MLMs) for cross-sectional IDA. In a simulation study under conditions consistent with applied IDA (e.g., 2–35 studies), we examined estimation bias and Type I error rates for participant-level and study-level effects, as well as variance components, for these models. For the MLMs, we also compared estimation methods (i.e., constrained vs. unconstrained variance estimation and five degrees of freedom methods). We found that two fixed-effects regression approaches (disaggregated and study-specific coefficients regressions) and both MLMs yielded fixed effects estimates with ignorable bias. However, only the random-slopes MLM fully modeled different sources of between-study heterogeneity and, consequently, provided well-controlled Type I error rates for testing both fixed effects when appropriate degrees of freedom methods were used. Furthermore, we found that MLMs could be feasibly estimated and tested under IDA conditions with three to six studies when estimation methods were well chosen. We illustrate and compare the five models in a real-data IDA example.